FalCon: A Faithful Contrastive Framework for Response Generation in TableQA Systems

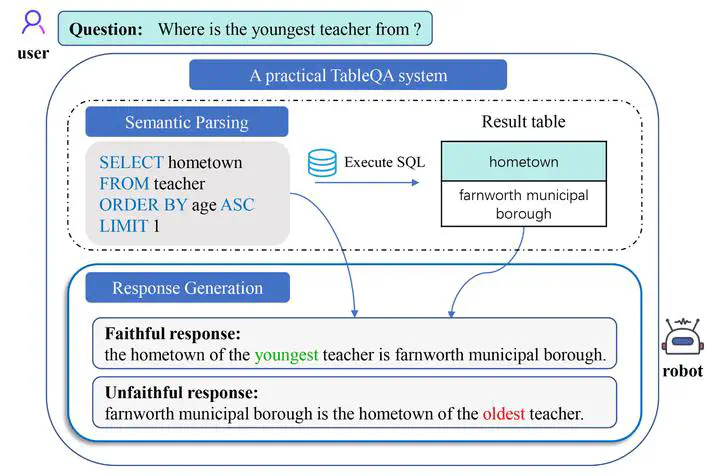

An illustration of a TableQA system.

Abstract

In a practical TableQA system, response generation is a critical module that produces a natural language description of the SQL query and its execution result. Due to the complex syntax of SQL and the difficulty of matching against table content, this task is prone to factual errors. In this paper, we propose FALCON, a FAithfuL CONtrastive generation framework, to improve the factual correctness of generated responses. FALCON forces the generation model to identify examples with factual errors in the latent space during training and takes contrastive examples into consideration during inference. We also propose two new automatic metrics that evaluate faithfulness specifically for this task. Experimental results show that FALCON yields favorable improvements over various baseline methods on both automatic and human evaluation.