Distilling Script Knowledge from Large Language Models for Constrained Language Planning

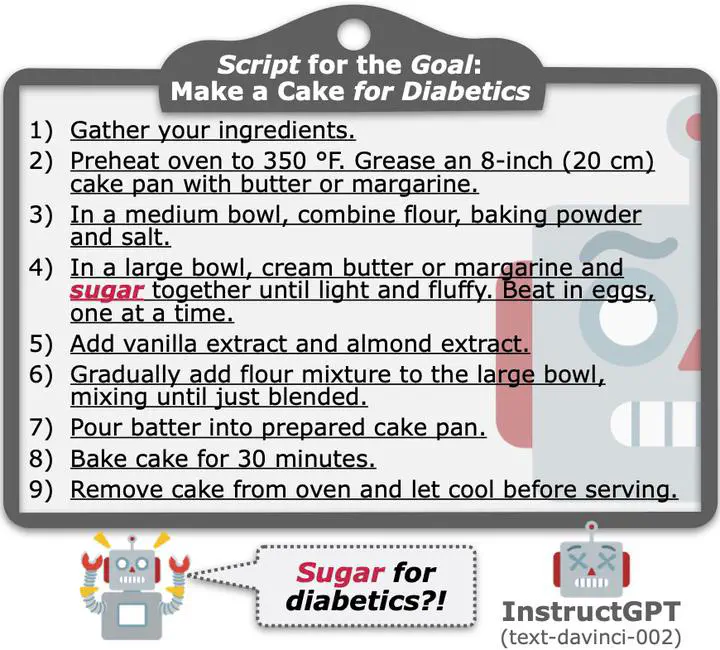

A list of steps InstructGPT generates to plan for the goal “make a cake for diabetics”.

A list of steps InstructGPT generates to plan for the goal “make a cake for diabetics”.

Abstract

In everyday life, humans often plan their actions by following step-by-step instructions in the form of goal-oriented scripts. Previous work has exploited language models (LMs) to plan for abstract goals of stereotypical activities (e.g., “make a cake”), but leaves more specific goals with multi-facet constraints understudied (e.g., “make a cake for diabetics”). In this paper, we define the task of constrained language planning for the first time. We propose an over-generate-then-filter approach to improve large language models (LLMs) on this task, and use it to distill a novel constrained language planning dataset, CoScript, which consists of 55,000 scripts. Empirical results demonstrate that our method significantly improves the constrained language planning ability of LLMs, especially on constraint faithfulness. Furthermore, CoScript is demonstrated to be quite effective in endowing smaller LMs with constrained language planning ability.